The quiet disappearance of boredom

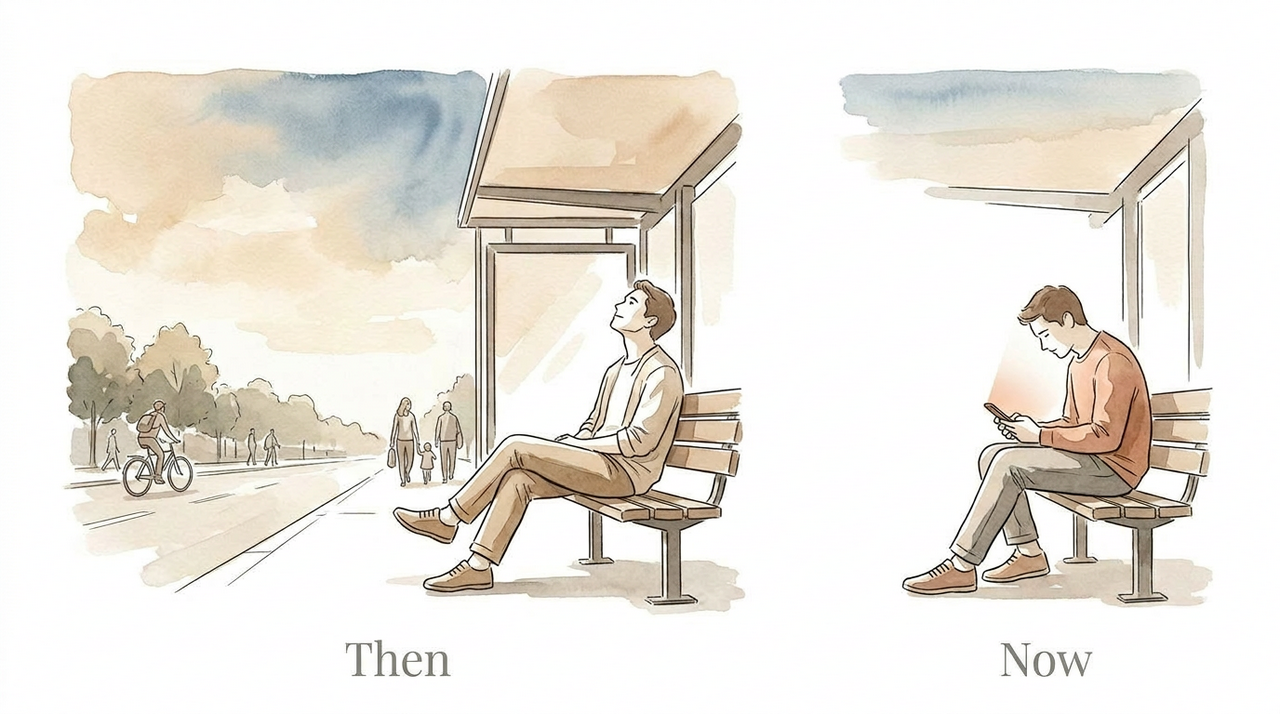

Fifteen years ago, if you were early to meet a friend, you'd just sit there. Watch people, daydream, or maybe stare at a wall.

Now you'd never even consider it. Your phone is out before you've sat down.

The disappearing in-between

It happens everywhere now. On the metro, in queues, at restaurants, at family dinners. A room full of people, all somewhere else. And this isn't generational anymore. It cuts across age groups. Everyone's in the same loop.

I catch myself doing it less now, but I'm not immune. The instinct to fill every quiet moment with a screen runs deep. It's muscle memory at this point.

Those in-between moments used to look different. People daydreamed. They looked out of bus windows. They struck up awkward conversations with strangers. They noticed things, like a kid doing something funny, a weird shop name, or a dog sleeping in the middle of the road, and those things would put a small, private smile on their faces.

These moments are small, but they connect you to the world around you in a way that no reel ever can.

We've traded all of that for a feed we won't remember by tomorrow.

It's not your fault (mostly)

Here's the thing most people don't realize: this isn't just a willpower problem. Your phone is engineered to be hard to put down.

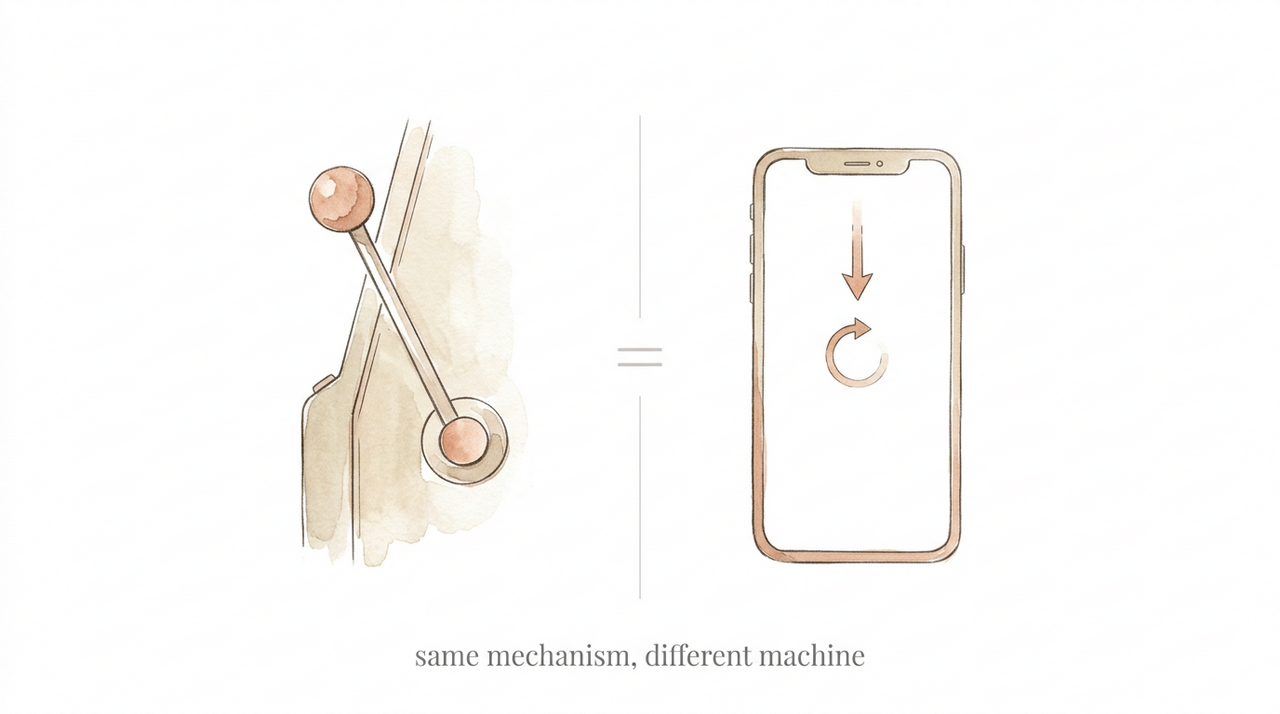

Tristan Harris, a former design ethicist at Google who went on to co-found the Center for Humane Technology, has compared smartphones to slot machines. Every time you pull down to refresh a feed, you're pulling a lever.

Maybe something interesting shows up. Maybe it doesn't.

That uncertainty is what keeps you going. It's the same psychological mechanism, called variable-ratio reinforcement, that makes gambling addictive.

Then there's infinite scroll, which was invented in 2006 by a designer named Aza Raskin.

His intent was simple: make browsing more seamless. But the feature removed every natural stopping point.

There's no bottom of the page. No moment where your brain gets a chance to ask, “do I actually want to keep going?”

Raskin has since expressed deep regret about his creation, estimating that infinite scrolling wastes roughly 200,000 human lifetimes per day.

Read that number again. 200,000 human lifetimes. Per day.

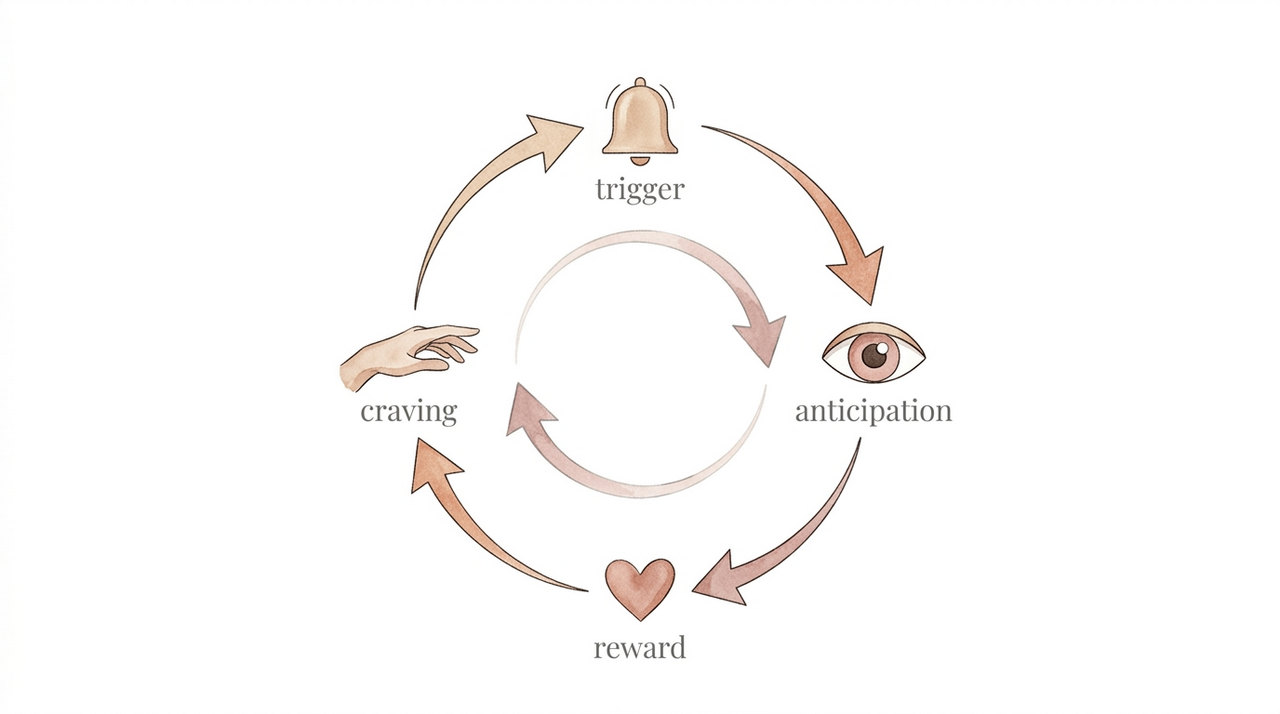

And it goes deeper than design tricks. Work coming out of Stanford's addiction medicine clinic suggests that smartphone use taps into the same dopamine reward pathways as addictive substances.

Every notification, every new post, every like triggers a small hit of dopamine, enough to keep you coming back but never enough to feel satisfied.

Psychiatrist Anna Lembke, author of Dopamine Nation, explains that with repeated use, the brain adapts by dialing down its own dopamine production.

Eventually, you're not reaching for your phone because it feels good. You're reaching for it to stop feeling bad.

The apps aren't designed to serve you. They're designed to keep you.

Three hours I didn't know I was losing

I quit Instagram a few years ago, around the time COVID hit. It wasn't a sudden decision. The thought had been sitting at the back of my head for a while. I started small: a digital detox over one weekend, then another. Eventually, I just stopped going back.

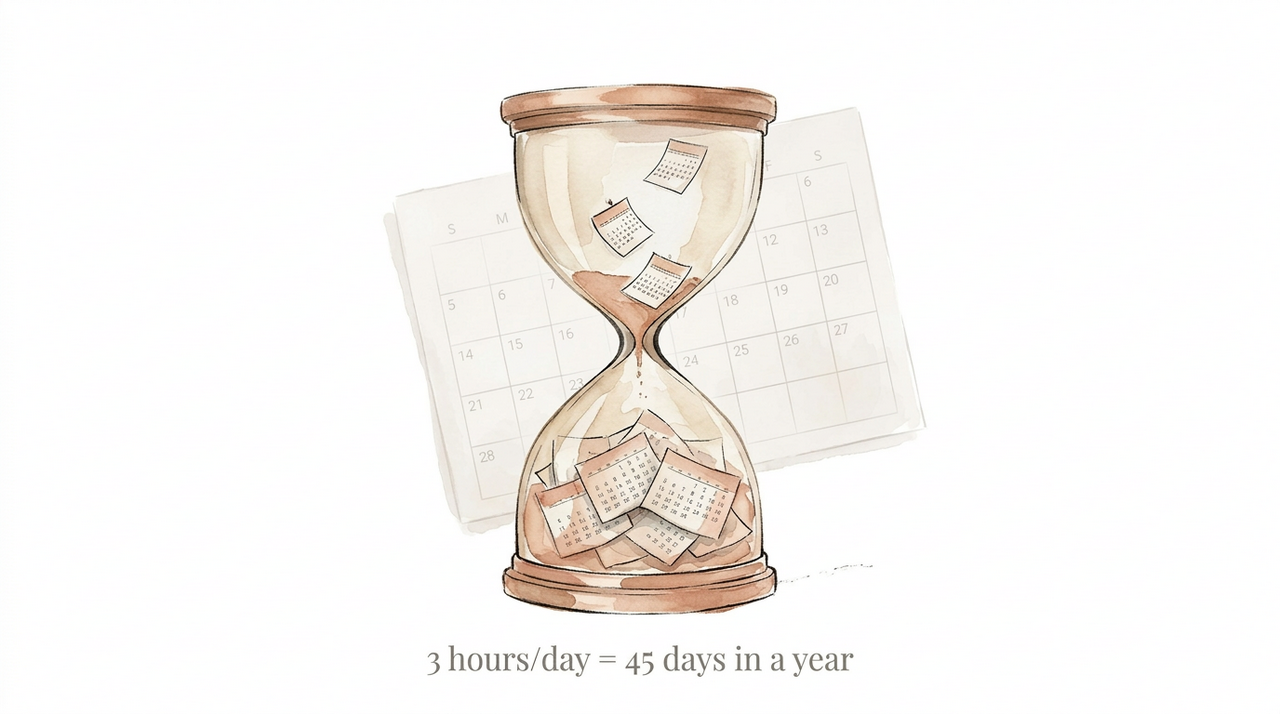

When I looked at my screen time, the number that stared back at me was close to three hours a day. Three hours. That's almost an entire afternoon, every single day, gone to a feed.

When I finally stopped, my days felt longer. Not in a slow, dragging way, but in a “wait, it's only 7pm?” kind of way. I suddenly had time I didn't know I'd been missing.

Here's a simple experiment: go check your screen time right now. Not the total, just Instagram or YouTube or whatever your default scroll app is. Look at the daily average. Multiply it by 365. At three hours a day, that comes to 1,095 hours a year, more than 45 full days. Your number will probably unsettle you too.

Being okay with not knowing

The most common pushback I get when I tell people I'm not on Instagram is some version of “but how do you keep up with what's happening?”

The honest answer: I don't, and I'm fine with it.

I miss a lot of stuff. I don't know what's trending. I find out about news late. None of it has mattered. Not once has missing a reel or a post had any real consequence for my life.

If something actually matters, if it involves someone I care about, the news finds its way to me. Either through them directly or through someone else. It always does. Everything else is noise. I have no interest in knowing what everyone had for dinner or where they went on vacation. And I have no interest in broadcasting my own life either.

FOMO is the fuel that keeps the machine running. You're so afraid of missing something online that you miss everything that's right in front of you.

Letting go of that turns out to be a surprisingly peaceful way to live.

The smallest shift

I'm not going to tell you to delete your apps. You've heard that sermon before and it doesn't work, partly because these apps are specifically designed to make quitting feel unbearable.

But the next time you're waiting for something (a bus, your food, a friend who's running late), try not reaching for your phone. Just for a few minutes.

See what you notice. See how it feels to just sit there with nothing to consume.

You might be bored. That's the point.

Boredom is where the good stuff lives.

***

Thanks for reading. Just notice something on your way home today!